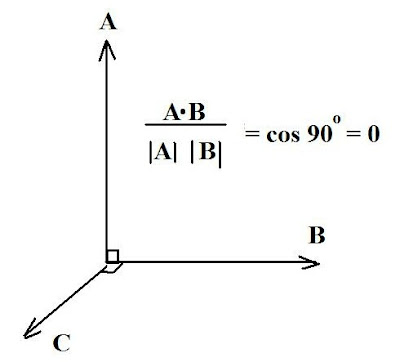

We now look at orthonormal bases. The term sounds esoteric, but many readers will have met the idea before, either in general physics or in advanced (AP) physics courses taken in high school, usually in conjunction with the dot product of vectors, as illustrated in Fig. 1.

Basically, in a Euclidean (straight-line, 3D) system of coordinates, two vectors are considered orthogonal if their inner product is zero, as shown. The geometric picture here assumes the underlying basis is orthonormal, i.e. composed of pairwise perpendicular vectors of unit length. Thus, in the figure shown, vectors A and B meet the orthogonality condition (the computation is shown for the vectors A, B), as do the vectors B and C. Provided A and C are likewise perpendicular and all three have unit length, the vectors A, B, C meet the condition for an orthonormal basis. There are proofs available but those are beyond the scope of this blog.
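The two conditions above (zero inner product for distinct vectors, unit length for each vector) can be checked mechanically. The following is a minimal Python sketch, not part of the original post; the helper names `dot` and `is_orthonormal` and the tolerance `tol` are illustrative assumptions.

```python
def dot(u, v):
    """Euclidean inner (dot) product of two equal-length real vectors."""
    return sum(a * b for a, b in zip(u, v))


def is_orthonormal(vectors, tol=1e-12):
    """Return True if each vector has unit length and distinct vectors are perpendicular.

    `tol` is an assumed tolerance for floating-point round-off.
    """
    for i, u in enumerate(vectors):
        for j, v in enumerate(vectors):
            expected = 1.0 if i == j else 0.0  # u.u should be 1, u.v should be 0
            if abs(dot(u, v) - expected) > tol:
                return False
    return True


standard = [(1, 0, 0), (0, 1, 0), (0, 0, 1)]
print(is_orthonormal(standard))                  # True
print(is_orthonormal([(1, 1, 0), (1, -1, 0)]))   # False: perpendicular, but length is sqrt(2)
```

The second call shows why both conditions matter: the two vectors are orthogonal, but neither has unit length, so they are not orthonormal until each is divided by its length.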

Now, in applications of this concept, what the student is usually asked to do (say in a linear algebra course) is find an orthonormal basis for a "subspace" of $R^3$ (i.e. of a Cartesian space of three dimensions) which is generated by a specified set of vectors.

Example:

Find an orthonormal basis for the subspace of $R^3$ generated by the vectors (1, 1, -1) and (1, 0, 1).

We let A = (1, 1, -1) and B = (1, 0, 1)

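Here $A \cdot B = (1)(1) + (1)(0) + (-1)(1) = 0$, so A and B are already perpendicular and we only need to scale each to unit length.

The unit vector along A is just:

$A/\|A\| = (1, 1, -1)/\sqrt{1^2 + 1^2 + (-1)^2} = (1, 1, -1)/\sqrt{3}$

The unit vector along B is:

$B/\|B\| = (1, 0, 1)/\sqrt{1^2 + 0^2 + 1^2} = (1, 0, 1)/\sqrt{2}$

Together these two unit vectors form the orthonormal basis.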
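As a quick numerical check of this example, here is a small Python sketch (the helper names `dot` and `normalize` are assumptions for illustration, not from the original post). It confirms that A and B are perpendicular and that dividing each by its length yields unit vectors.

```python
import math


def dot(u, v):
    """Euclidean inner (dot) product of two equal-length real vectors."""
    return sum(a * b for a, b in zip(u, v))


def normalize(v):
    """Scale v to unit length by dividing by its Euclidean norm."""
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)


A = (1, 1, -1)
B = (1, 0, 1)

print(dot(A, B))   # 0, so A and B are already orthogonal

e1 = normalize(A)  # (1, 1, -1)/sqrt(3)
e2 = normalize(B)  # (1, 0, 1)/sqrt(2)
print(e1, e2)
```

Normalizing alone is enough here only because the dot product of A and B is zero; for vectors that are not perpendicular, an extra orthogonalization step is needed first.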

Of course, the beauty of linear algebra is that it generalizes to Euclidean spaces beyond the mundane three dimensions, so we can also look at subspaces of $R^4$, generated by sets of vectors with four components $(v_1, v_2, v_3, v_4)$.

Example:

Find an orthonormal basis for the subspace of $R^4$ generated by the vectors:

A = (1, 2, 1, 0) and B = (1, 2, 3, 1)

For A, normalizing gives the first basis vector:

$A/\|A\| = (1, 2, 1, 0)/\sqrt{1^2 + 2^2 + 1^2 + 0^2} = (1, 2, 1, 0)/\sqrt{6}$

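For B, normalizing alone gives:

$B/\|B\| = (1, 2, 3, 1)/\sqrt{1^2 + 2^2 + 3^2 + 1^2} = (1, 2, 3, 1)/\sqrt{15}$

Here, however, $A \cdot B = 1 + 4 + 3 + 0 = 8 \neq 0$, so this unit vector is not perpendicular to $A/\|A\|$. To get an orthonormal basis we first subtract from B its component along A (the Gram-Schmidt step):

$B' = B - \frac{A \cdot B}{A \cdot A} A = (1, 2, 3, 1) - \frac{8}{6}(1, 2, 1, 0) = \frac{1}{3}(-1, -2, 5, 3)$

and then normalize:

$B'/\|B'\| = (-1, -2, 5, 3)/\sqrt{39}$

The orthonormal basis is then $(1, 2, 1, 0)/\sqrt{6}$ and $(-1, -2, 5, 3)/\sqrt{39}$.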
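For generating vectors that are not already perpendicular, such as A and B in this example (their dot product is 8), a standard way to build an orthonormal basis is the Gram-Schmidt procedure. The original post does not name a method, so treat the following Python as a hedged sketch under that assumption; the function name `gram_schmidt` and the tolerance `tol` are illustrative choices, and the loop uses the "modified" ordering, which subtracts projections from the running remainder.

```python
import math


def dot(u, v):
    """Euclidean inner (dot) product of two equal-length real vectors."""
    return sum(a * b for a, b in zip(u, v))


def gram_schmidt(vectors, tol=1e-12):
    """Return an orthonormal basis for the span of `vectors` (real case only).

    Vectors that are (numerically) dependent on earlier ones are skipped.
    """
    basis = []
    for v in vectors:
        w = [float(x) for x in v]
        for e in basis:
            c = dot(w, e)                        # component of w along e
            w = [wi - c * ei for wi, ei in zip(w, e)]
        length = math.sqrt(dot(w, w))
        if length > tol:                         # keep only independent directions
            basis.append([wi / length for wi in w])
    return basis


A = (1, 2, 1, 0)
B = (1, 2, 3, 1)

e1, e2 = gram_schmidt([A, B])
print(e1)            # proportional to (1, 2, 1, 0), scaled by 1/sqrt(6)
print(e2)            # proportional to (-1, -2, 5, 3), scaled by 1/sqrt(39)
print(dot(e1, e2))   # approximately 0, up to round-off
```

The same function can be tried on the real-valued practice problems; the complex case would need the conjugate (Hermitian) inner product, which this sketch deliberately does not handle.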

Practice Problems:

(1) Find an orthonormal basis for the subspace of $R^3$ generated by the vectors:

A = (2, 1, 1) and B = (1, 3, -1)

(2) Find an orthonormal basis for the subspace of $R^4$ generated by the vectors:

A = (1, 1, 0, 0)

B = (1, -1, 1, 1)

C = (-1, 0, 2, 1)

(3) Find an orthonormal basis for the subspace of the complex space $C^3$ generated by the vectors:

A = (1, -1, i)

and

B = (i, 1, 2)

2 comments:

That the Simplex Algorithm (pivoting) revises the scalars on the “Tableau of Detached Coefficients” in the way one computes them with matrix-vector algebra is verified by experience only! The revision formula for $i \neq i_{0}$

$\bar{a}_{i, j} = ( a_{i, j}a_{i_{0}, j_{0}} - a_{i, j_{0}}a_{i_{0}, j} ) / a_{i_{0}, j_{0}}$

with pivot $a_{i_{0}, j_{0}}$ is not written in any textbook as if it is unnecessary. A “Fundamental Theorem of Simplex Algorithm” is due to be proven. Am I right?

You might find this paper useful

http://www.maths.ed.ac.uk/hall/RealSimplex/25_01_07_talk1.pdf

in pursuing your interest here, which is more in the field of linear programming than basic linear algebra per se (the series of posts is intended more for general linear algebra students).

Note also that merely because a result is "not written in any textbook" doesn't mean proof is "unnecessary". It may also mean you haven't yet found the text in which said proof is written (there are thousands of texts in linear programming, after all), or that there is research (e.g. arXiv papers) where it is elaborated on but not yet integrated into textbooks.

I also do not believe there is any equivalent "Fundamental Theorem of Simplex Algorithm", say on a par with the fundamental theorems of calculus or algebra.
